Ailing Zeng 曾爱玲

|

Biography

I'm a technical staff member at Anuttacon, leading the development of a human-centric interactive multimodal video generation system. Its models enable agents to perceive, interact with, and generate real-time, long-horizon video behaviors. Previously, I spent three wonderful years at Tencent Hunyuan & AI Lab and the International Digital Economy Academy (IDEA), leading a human-centric perception and generation research team. I obtained my Ph.D. from the Department of Computer Science and Engineering at the Chinese University of Hong Kong, supervised by Prof. Qiang Xu. I was also a visiting scholar at the Robotics Institute, Carnegie Mellon University.

Selected previous research:

1) Human-centric visual perception with large-scale data and generic models: IDOL, AiOS, SMPLer-X, OSX, DW-Pose, ED-Pose, SmoothNet, DeciWatch

2) Large-scale multi-modality datasets: Motion-X, UBody, Uni-KPT, BallPlay, HuMMan, Human-Art

3) Human-centric generative models: MotionCraft, HumanSD, PhysHOI, Dreamwaltz, HumanTOMATO, DiffSHEG

4) Interactive AI & Human-in-the-loop techniques: X-Pose, Click-Pose, Grounded-SAM

5) Earlier work on time series analysis and forecasting: LTSF-Linear, SCINet, FITS

We are hiring full-time researchers, engineers, and interns based in Mountain View or Singapore; see our open roles. Feel free to reach out if you are interested.

News

[2026.04] We introduce LPM 1.0, a video-based character performance model that generates real-time video with full-duplex conversation, identity-consistent infinite-length generation, and nuanced human-like performance: [Project Page] [Paper].

[2026.01] We are hosting the ICLR 2026 Tutorial on AI with Recursive Self-Improvement; paper submissions are welcome: [Link].

[2025] 5 papers were accepted to ICCV/CVPR/AAAI/ICML 2025, and 4 papers were accepted to TPAMI.

[2025.10] Invited talk at the "1st Workshop on Interactive Human-centric Foundation Models", ICCV 2025.

[2025.10] We are hosting the SIGGRAPH Asia 2025 Workshop on "Towards Embodied Intelligence Across Humans, Avatars, and Humanoid Robotics".

[2025.03] Please check out and register for our AI-native game project, Whispers from the Star. Stay tuned!

[2025.02] Invited talk on video generation at the Max Planck Institute for Intelligent Systems, hosted by Michael J. Black.

[2024] 13 papers were accepted to CVPR/ICML/ICLR/ECCV/SIGGRAPH-Asia/NeurIPS 2024.

[2024.10] I serve as an Area Chair for CVPR 2025.

[2024.05] LTSF-Linear was selected as the Most Influential Paper in AAAI 2023!

[2024.04] We are hosting the ECCV 2024 Tutorial on “Recent Advances in Video Content Understanding and Generation” (VENUE).

[2023.12] We are hosting the CVPR 2024 Workshop on “Computer Vision with Humans in the Loop” (CVHL).

[2023] 13 papers were accepted to CVPR/ICLR/NeurIPS/ICCV/AAAI 2023.

Selected Research

See the full list on Google Scholar. (*equal contribution, #corresponding author or project lead)

|

Ailing Zeng#, Casper Yang, Chauncey Ge, Eddie Zhang, Garvey Xu, Gavin Lin, Gilbert Gu, Jeremy Pi, Leo Li, Mingyi Shi, Sheng Bi, Steven Tang, Thorn Hang, Tobey Guo, Vincent Li, Xin Tong#, Yikang Li, Yuchen Sun, Yue (R) Zhao, Yuhan Lu, Yuwei Li, Zane Zhang, Zeshi Yang, Zi Ye technical report, 2026 LPM 1.0 generates real-time video with full-duplex conversation, identity-consistent infinite-length generation, and nuanced human-like performance. |

|

Ailing Zeng*, Yuhang Yang*, Weidong Chen, Wei Liu technical report, 2024 A systematic empirical study of 21 SORA-like text-to-video, image-to-video, and video-to-video models. |

|

Yiyu Zhuang*, Jiaxi Lv*, Hao Wen*, Qing Shuai, Ailing Zeng#, Hao Zhu#, Shifeng Chen, Yujiu Yang, Xun Cao, Wei Liu CVPR, 2025 Rethink 3D human reconstruction from an image via a feed-forward ViT model trained on 100K multi-view subjects, making the model fast, photo-realistic, and generalizable. |

|

Yinhuai Wang, Qihan Zhao, Runyi Yu, Ailing Zeng#, Jing Lin, Zhengyi Luo, Hok Wai Tsui, Jiwen Yu, Xiu Li, Qifeng Chen, Jian Zhang#, Lei Zhang, Ping Tan CVPR, 2025 It proposes a unified reward to learn diverse basketball skills for physically and dynamically plausible humanoid-ball motion simulation from the proposed human-ball motion datasets. |

|

Yuxuan Bian, Ailing Zeng#, Xuan Ju, Xian Liu, Zhaoyang Zhang, Wei Liu, Qiang Xu AAAI, 2025 MotionCraft unifies (text2motion, speech2gesture, music2dance) whole-body motion generation within a plug-and-play multimodal DiT model. |

|

Yukun Huang*, Jianan Wang*, Ailing Zeng, Zheng-Jun Zha, Lei Zhang, Xihui Liu Extended Version of DreamWaltz [NeurIPS 2023] It creates high-quality text-driven 3D avatars and expressive whole-body animation via the proposed Skeleton-guided Score Distillation and Hybrid 3D Gaussian Avatar Representation. |

|

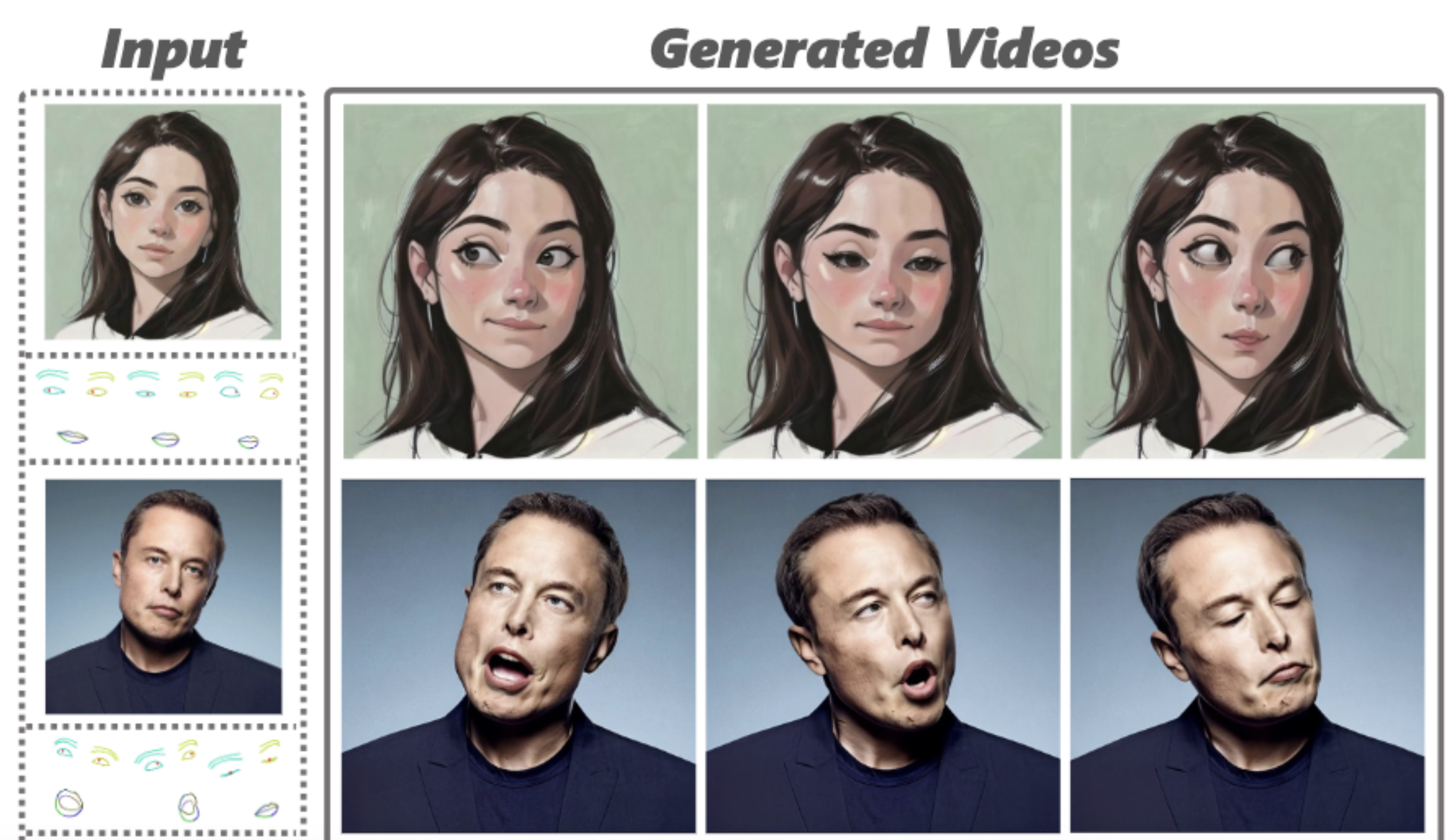

Yue Ma, Hongyu Liu, Hongfa Wang, Heng Pan, Yingqing He, Junkun Yuan, Ailing Zeng, Chengfei Cai, Heung-Yeung Shum, Wei Liu, Qifeng Chen SIGGRAPH Asia, 2024 A keypoint-controllable, diffusion-based framework for expressive portrait image animation. |

|

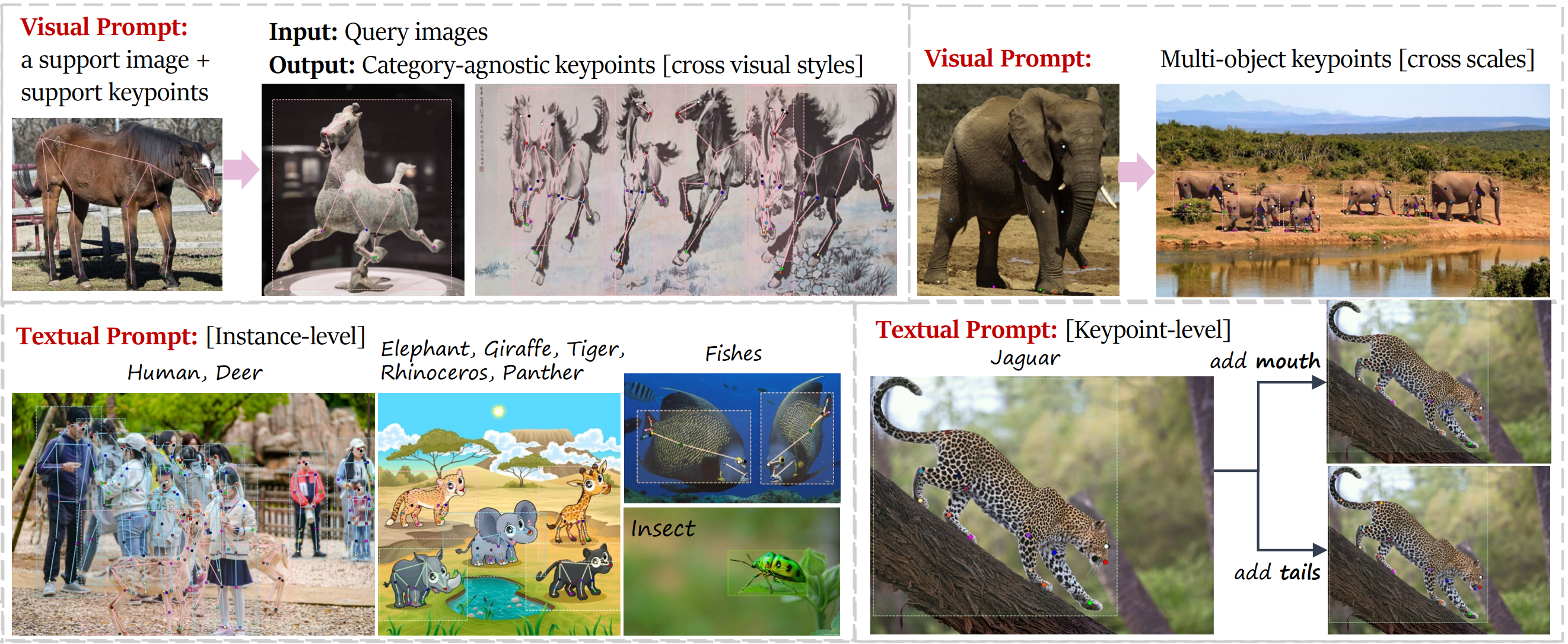

Jie Yang, Ailing Zeng#, Ruimao Zhang#, Lei Zhang European Conference on Computer Vision (ECCV), 2024 UniPose supports textual and visual prompts to detect arbitrary keypoints (e.g., on articulated, rigid, and soft objects). |

|

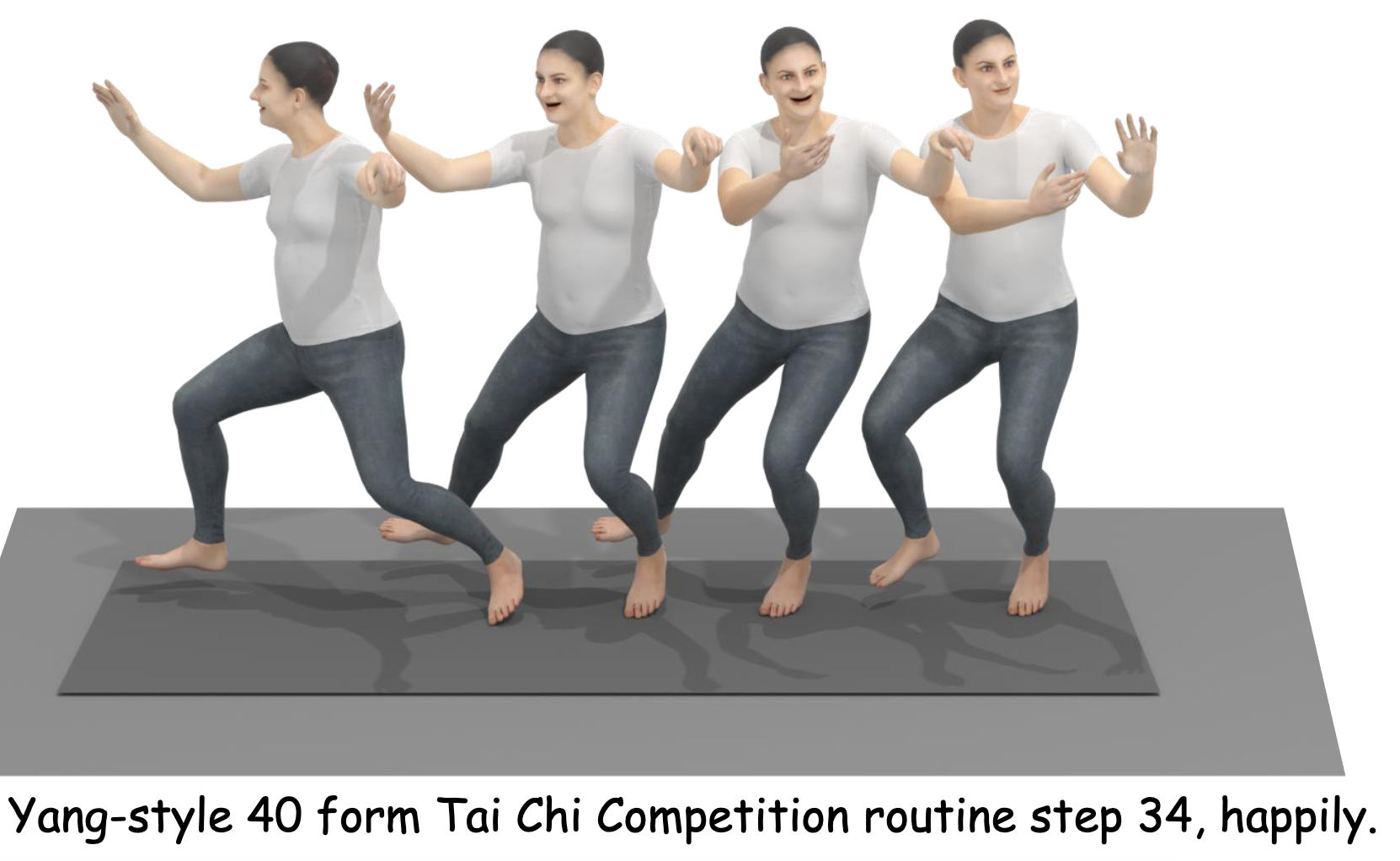

Shunlin Lu*, Ling-Hao Chen*, Ailing Zeng#, Jing Lin, Ruimao Zhang#, Lei Zhang, Heung-Yeung Shum# The 41st International Conference on Machine Learning (ICML), 2024 The first text-driven whole-body motion generation method with an explicit text-motion alignment. |

|

Tianhe Ren, Shilong Liu, Ailing Zeng, Jing Lin, Kunchang Li, He Cao, Jiayu Chen, Xinyu Huang, Yukang Chen, Feng Yan, Zhaoyang Zeng, Hao Zhang, Feng Li, Jie Yang, Hongyang Li, Qing Jiang, Lei Zhang Technical report, 2024 Combining the segment anything model (SAM) with X, e.g., Grounding DINO or OSX for various visual tasks. |

|

Yinhuai Wang, Jing Lin, Ailing Zeng#, Zhengyi Luo, Jian Zhang#, Lei Zhang Technical report, 2023 The physics-based whole-body Human-Object Interaction (HOI) imitation approach for dynamic HOI and a proposed BallPlay dataset. |

|

Qingping Sun*, Yanjun Wang*, Ailing Zeng, Wanqi Yin, Chen Wei, Wenjia Wang, Haiyi Mei, Chi Sing Leung, Ziwei Liu, Lei Yang, Zhongang Cai Rank Top-1 on AGORA SMPL-X leaderboard! [link] The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024 An end-to-end DETR-based model for multi-person whole-body mesh recovery. |

|

Jiong Wang, Fengyu Yang, Wenbo Gou, Bingliang Li, Danqi Yan, Ailing Zeng, Yijun Gao, Junle Wang, Yanqing Jing, Ruimao Zhang The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024 The first large-scale real-world multi-view dataset, comprising 11M frames from 8k sequences captured with 8 synchronized smartphones. |

|

|

Xuangeng Chu, Yu Li, Ailing Zeng, Tianyu Yang, Lijian Lin, Yunfei Liu, Tatsuya Harada The Twelfth International Conference on Learning Representations (ICLR), 2024 A framework for 3D head avatar reconstruction from one or several images in a single forward pass. |

|

Jing Lin*, Ailing Zeng*#, Shunlin Lu*, Yuanhao Cai, Ruimao Zhang, Haoqian Wang, Lei Zhang Conference on Neural Information Processing Systems (NeurIPS) Datasets and Benchmarks Track, 2023 A large-scale 3D expressive whole-body human motion dataset (over 10M frames) with SMPL-X, text, audio, and RGB modalities. |

|

Zhongang Cai*, Wanqi Yin*, Ailing Zeng, Chen Wei, Qingping Sun, Yanjun Wang, Hui En Pang, Haiyi Mei, Mingyuan Zhang, Lei Zhang, Chen Change Loy, Lei Yang, Ziwei Liu Conference on Neural Information Processing Systems (NeurIPS) Datasets and Benchmarks Track, 2023 Rank Top-1 on 7 SMPL/SMPL-X benchmarks! SMPL-X estimation with a systematic investigation on 32 datasets and model scaling-up. |

|

Yukun Huang*, Jianan Wang*, Ailing Zeng, He Cao, Xianbiao Qi, Yukai Shi, Zheng-Jun Zha, Lei Zhang Conference on Neural Information Processing Systems (NeurIPS), 2023 A text-driven 3D animatable avatar creation framework with complex scenes. |

|

Zhendong Yang, Ailing Zeng, Chun Yuan, Yu Li The Thirty-Fourth IEEE/CVF International Conference on Computer Vision (ICCV), CV4Metaverse Workshop, 2023 Rank Top-1 on the COCO-WholeBody benchmark. A better alternative to OpenPose. |

|

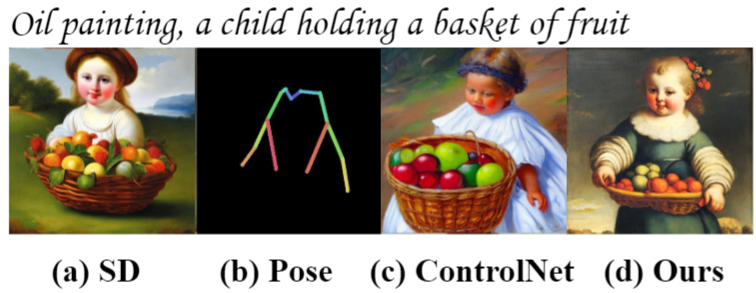

Xuan Ju*, Ailing Zeng*, Chenchen Zhao*, Jianan Wang, Lei Zhang, Qiang Xu (Oral) The Thirty-Fourth IEEE/CVF International Conference on Computer Vision (ICCV), 2023 HumanSD highlights multi-scenario human-centric image generation with precise pose control. |

|

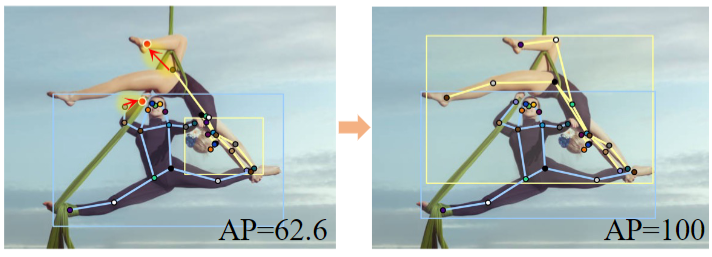

Jie Yang, Ailing Zeng#, Feng Li, Shilong Liu, Ruimao Zhang#, Lei Zhang The Thirty-Fourth IEEE/CVF International Conference on Computer Vision (ICCV), 2023 The first end-to-end neural interactive keypoint detection/annotation framework, which reduces labeling costs by more than 10 times. |

|

Rank Top-1 on AGORA SMPL-X leaderboard! (2023.04) [link] Jing Lin, Ailing Zeng#, Haoqian Wang, Lei Zhang, Yu Li The Thirty-Fourth IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023 The first one-stage framework for 3D whole-body mesh recovery and a large-scale upper-body dataset. |

|

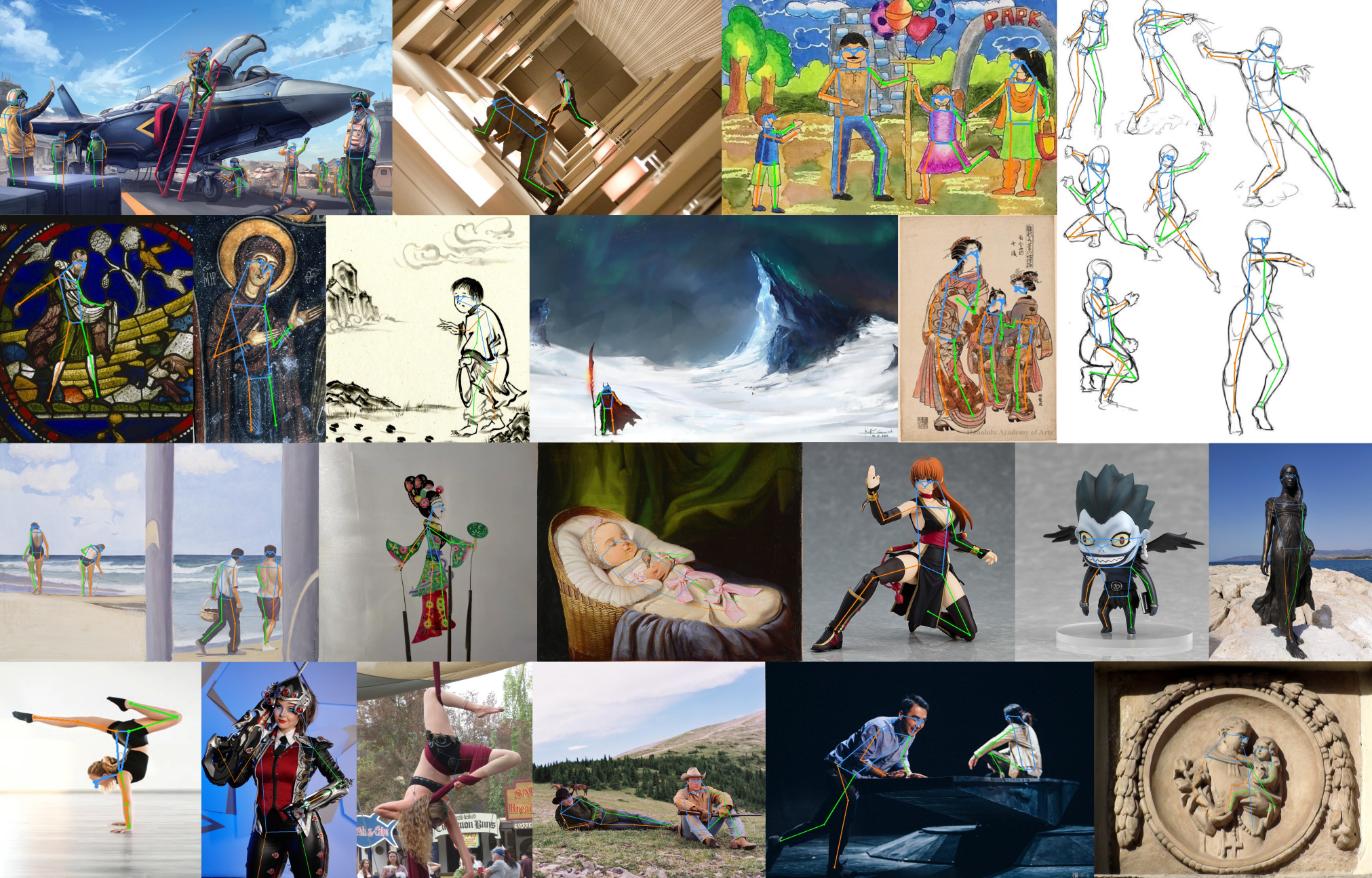

Xuan Ju, Ailing Zeng#, Jianan Wang, Qiang Xu, Lei Zhang The Thirty-Fourth IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023 Human-Art contains 50k high-quality images with over 123k person instances from 5 natural and 15 artificial scenarios, which are annotated with bounding boxes, keypoints, self-contact points, and text information for humans represented in both 2D and 3D. |

|

Jie Yang, Ailing Zeng#, Shilong Liu, Feng Li, Ruimao Zhang#, Lei Zhang Eleventh International Conference on Learning Representations (ICLR), 2023 We present a novel end-to-end framework with Explicit box Detection for multi-person Pose estimation. |

|

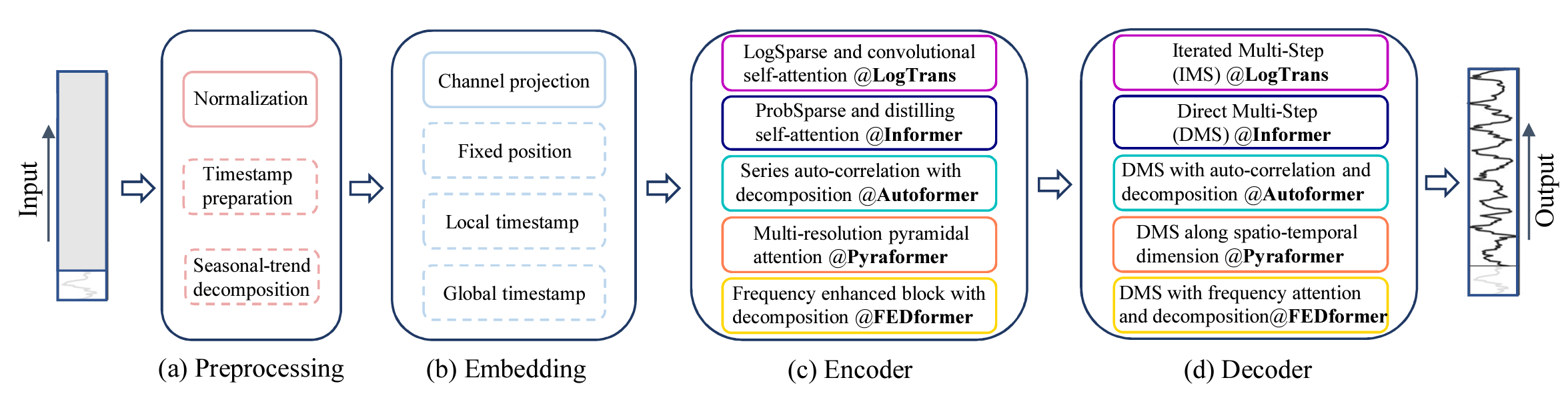

[bilibili] Ailing Zeng, Muxi Chen, Lei Zhang, Qiang Xu (Oral) The Most Influential Paper in AAAI 2023, Thirty-Seventh AAAI Conference on Artificial Intelligence (AAAI), 2023 We question the validity of Transformer-based time series forecasting solutions via a linear layer. |

|

[Data] Ailing Zeng, Xuan Ju, Lei Yang, Ruiyuan Gao, Xizhou Zhu, Bo Dai, Qiang Xu European Conference on Computer Vision (ECCV), 2022 DeciWatch achieves a more than 10x efficiency improvement over existing works without any performance degradation for video-based 2D/3D human pose estimation. |

|

[Zhihu] Ailing Zeng, Lei Yang, Xuan Ju, Jiefeng Li, Jianyi Wang, Qiang Xu European Conference on Computer Vision (ECCV), 2022 SmoothNet is a plug-and-play refinement network that improves the temporal smoothness and per-frame precision of any existing pose estimator. |

|

Zhongang Cai*, Daxuan Ren*, Ailing Zeng*, Zhengyu Lin*, Tao Yu*, Wenjia Wang*, Xiangyu Fan, Yang Gao, Yifan Yu, Liang Pan, Fangzhou Hong, Mingyuan Zhang, Chen Change Loy, Lei Yang^, Ziwei Liu^ (Oral) European Conference on Computer Vision (ECCV), 2022 HuMMan is a large-scale multi-modal 4D human dataset with 1000 human subjects, 400k sequences and 60M frames. |

|

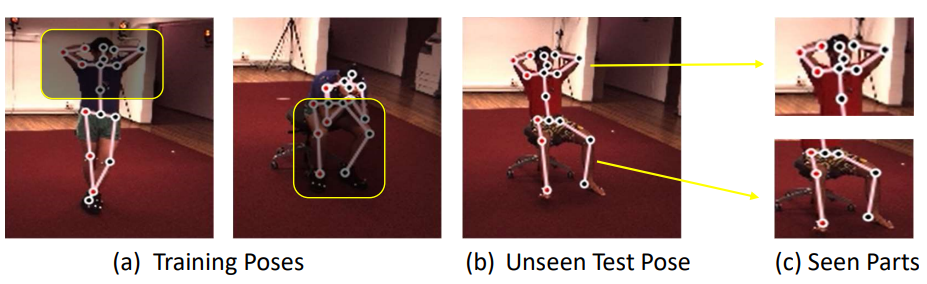

Ailing Zeng, Xiao Sun, Fuyang Huang, Minhao Liu, Qiang Xu, Stephen Lin 2020 European Conference on Computer Vision (ECCV’20) We design a split-and-recombine approach to improve generalization performance in 3D human pose estimation, especially on rare and unseen poses. |

Honors & Awards

KAUST AI Rising Star, 2025

Shenzhen Artificial Intelligence Natural Science Award, 2023

Shenzhen Pengcheng Special Talent Award, 2023

Full Postgraduate Studentship, CUHK (2017 - 2021)

Excellent League Member, Top 1% student of Xiamen University

National Scholarship (2015)